http.server — HTTP servers — Python 3.10.0 documentation

Source code: Lib//

This module defines classes for implementing HTTP servers.

Warning

http.server is not recommended for production. It only implements basic security checks.

One class, HTTPServer, is a socketserver.TCPServer subclass.

It creates and listens at the HTTP socket, dispatching the requests to a

handler. Code to create and run the server looks like this:

def run(server_class=HTTPServer, handler_class=BaseHTTPRequestHandler):
    server_address = ('', 8000)
    httpd = server_class(server_address, handler_class)
    httpd.serve_forever()

class http.server.HTTPServer(server_address, RequestHandlerClass)

This class builds on the TCPServer class by storing

the server address as instance variables named server_name and

server_port. The server is accessible by the handler, typically

through the handler’s server instance variable.

class http.server.ThreadingHTTPServer(server_address, RequestHandlerClass)

This class is identical to HTTPServer but uses threads to handle

requests by using the ThreadingMixIn. This

is useful to handle web browsers pre-opening sockets, on which

HTTPServer would wait indefinitely.

New in version 3.7.

The HTTPServer and ThreadingHTTPServer must be given

a RequestHandlerClass on instantiation, of which this module

provides three different variants:

class http.server.BaseHTTPRequestHandler(request, client_address, server)

This class is used to handle the HTTP requests that arrive at the server. By

itself, it cannot respond to any actual HTTP requests; it must be subclassed

to handle each request method (e.g. GET or POST).

BaseHTTPRequestHandler provides a number of class and instance

variables, and methods for use by subclasses.

The handler will parse the request and the headers, then call a method

specific to the request type. The method name is constructed from the

request. For example, for the request method SPAM, the do_SPAM()

method will be called with no arguments. All of the relevant information is

stored in instance variables of the handler. Subclasses should not need to

override or extend the __init__() method.
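The dispatch described above can be sketched as follows. This is a minimal illustration rather than a reference implementation: the names HelloHandler and fetch_once are hypothetical, the server binds to 127.0.0.1 on an OS-chosen port, and the client side uses http.client only so that the example is self-contained.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class HelloHandler(BaseHTTPRequestHandler):
    """Hypothetical handler: the server calls do_GET() for each GET request."""

    def do_GET(self):
        body = f"You asked for {self.path}\n".encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Silence the default logging for this demonstration.
        pass


def fetch_once(path="/hello"):
    # Port 0 asks the operating system for any free port.
    server = HTTPServer(("127.0.0.1", 0), HelloHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", server.server_port, timeout=5)
        conn.request("GET", path)
        resp = conn.getresponse()
        status, text = resp.status, resp.read().decode("utf-8")
        conn.close()
        return status, text
    finally:
        server.shutdown()
        server.server_close()
```

Calling fetch_once("/hello") starts the server, issues one GET request, and shuts the server down again. Adding a do_POST() method to the handler would enable POST requests in the same way.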

BaseHTTPRequestHandler has the following instance variables:

client_address¶

Contains a tuple of the form (host, port) referring to the client’s

address.

server¶

Contains the server instance.

close_connection¶

Boolean that should be set before handle_one_request() returns,

indicating if another request may be expected, or if the connection should

be shut down.

requestline¶

Contains the string representation of the HTTP request line. The

terminating CRLF is stripped. This attribute should be set by

handle_one_request(). If no valid request line was processed, it

should be set to the empty string.

command¶

Contains the command (request type). For example, ‘GET’.

path¶

Contains the request path. If query component of the URL is present,

then path includes the query. Using the terminology of RFC 3986,

path here includes hier-part and the query.
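Because path keeps the query component, a handler that needs the two parts separately has to split them itself. One possible approach, assuming the standard urllib.parse helpers are acceptable (split_request_path is a hypothetical helper name):

```python
from urllib.parse import parse_qs, urlsplit


def split_request_path(path):
    """Return (path_without_query, parsed_query_dict) for a request path."""
    parts = urlsplit(path)
    return parts.path, parse_qs(parts.query)
```

Inside a do_GET() method this could be called as split_request_path(self.path).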

request_version¶

Contains the version string from the request. For example, 'HTTP/1.0'.

headers¶

Holds an instance of the class specified by the MessageClass class

variable. This instance parses and manages the headers in the HTTP

request. The parse_headers() function from http.client is used to parse the headers and it requires that the HTTP request provide a valid RFC 2822 style header.

rfile¶

An io.BufferedIOBase input stream, ready to read from

the start of the optional input data.

wfile¶

Contains the output stream for writing a response back to the

client. Proper adherence to the HTTP protocol must be used when writing to

this stream in order to achieve successful interoperation with HTTP

clients.

BaseHTTPRequestHandler has the following attributes:

server_version¶

Specifies the server software version. You may want to override this. The

format is multiple whitespace-separated strings, where each string is of

the form name[/version]. For example, 'BaseHTTP/0.2'.

sys_version¶

Contains the Python system version, in a form usable by the

version_string method and the server_version class

variable. For example, 'Python/1.4'.

error_message_format¶

Specifies a format string that should be used by the send_error() method for building an error response to the client. The string is filled by default with variables from responses based on the status code that is passed to send_error().

error_content_type¶

Specifies the Content-Type HTTP header of error responses sent to the

client. The default value is ‘text/html’.

protocol_version¶

This specifies the HTTP protocol version used in responses. If set to 'HTTP/1.1', the server will permit HTTP persistent connections; however, your server must then include an accurate Content-Length header (using send_header()) in all of its responses to clients. For backwards compatibility, the setting defaults to 'HTTP/1.0'.

MessageClass¶

Specifies an email.message.Message-like class to parse HTTP headers. Typically, this is not overridden, and it defaults to http.client.HTTPMessage.

responses¶

This attribute contains a mapping of error code integers to two-element tuples

containing a short and long message. For example, {code: (shortmessage,

longmessage)}. The shortmessage is usually used as the message key in an

error response, and longmessage as the explain key. It is used by

send_response_only() and send_error() methods.
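As a small illustration of the responses mapping, the sketch below looks up the default short and long messages for a status code. The helper name describe is hypothetical, and the '???' fallback mirrors the default that send_error() is documented to use for unknown codes.

```python
from http.server import BaseHTTPRequestHandler


def describe(code):
    """Return (shortmessage, longmessage) for code, or '???' placeholders."""
    return BaseHTTPRequestHandler.responses.get(code, ("???", "???"))


short, long_message = describe(404)
```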

A BaseHTTPRequestHandler instance has the following methods:

handle()¶

Calls handle_one_request() once (or, if persistent connections are

enabled, multiple times) to handle incoming HTTP requests. You should

never need to override it; instead, implement appropriate do_*()

methods.

handle_one_request()¶

This method will parse and dispatch the request to the appropriate

do_*() method. You should never need to override it.

handle_expect_100()¶

When an HTTP/1.1 compliant server receives an Expect: 100-continue request header, it responds with a 100 Continue followed by 200 OK headers.

This method can be overridden to raise an error if the server does not want the client to continue. For example, the server can choose to send 417 Expectation Failed as a response header and return False.

New in version 3.2.

send_error(code, message=None, explain=None)¶

Sends and logs a complete error reply to the client. The numeric code

specifies the HTTP error code, with message as an optional, short, human

readable description of the error. The explain argument can be used to

provide more detailed information about the error; it will be formatted

using the error_message_format attribute and emitted, after

a complete set of headers, as the response body. The responses

attribute holds the default values for message and explain that

will be used if no value is provided; for unknown codes the default value for both is the string ???. The body will be empty if the method is

HEAD or the response code is one of the following: 1xx,

204 No Content, 205 Reset Content, 304 Not Modified.

Changed in version 3.4: The error response includes a Content-Length header. Added the explain argument.

send_response(code, message=None)¶

Adds a response header to the headers buffer and logs the accepted

request. The HTTP response line is written to the internal buffer,

followed by Server and Date headers. The values for these two headers

are picked up from the version_string() and

date_time_string() methods, respectively. If the server does not

intend to send any other headers using the send_header() method,

then send_response() should be followed by an end_headers()

call.

Changed in version 3.3: Headers are stored to an internal buffer and end_headers() needs to be called explicitly.

send_header(keyword, value)¶

Adds the HTTP header to an internal buffer which will be written to the

output stream when either end_headers() or flush_headers() is

invoked. keyword should specify the header keyword, with value

specifying its value. Note that, after the send_header calls are done,

end_headers() MUST BE called in order to complete the operation.

Changed in version 3.2: Headers are stored in an internal buffer.

send_response_only(code, message=None)¶

Sends the response header only, used when a 100 Continue response is sent by the server to the client. The headers are not buffered and are sent directly to the output stream. If the message is not specified, the HTTP message corresponding to the response code is sent.

end_headers()¶

Adds a blank line

(indicating the end of the HTTP headers in the response)

to the headers buffer and calls flush_headers().

Changed in version 3.2: The buffered headers are written to the output stream.

flush_headers()¶

Finally send the headers to the output stream and flush the internal

headers buffer.

New in version 3.3.

log_request(code='-', size='-')

Logs an accepted (successful) request. code should specify the numeric

HTTP code associated with the response. If a size of the response is

available, then it should be passed as the size parameter.

log_error(...)

Logs an error when a request cannot be fulfilled. By default, it passes

the message to log_message(), so it takes the same arguments

(format and additional values).

log_message(format, ...)

Logs an arbitrary message to sys.stderr. This is typically overridden

to create custom error logging mechanisms. The format argument is a

standard printf-style format string, where the additional arguments to

log_message() are applied as inputs to the formatting. The client

ip address and current date and time are prefixed to every message logged.
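Overriding log_message() might look like the sketch below, which collects formatted messages in memory instead of writing them to sys.stderr. The names captured, QuietHandler and request_once are hypothetical, and the in-memory list stands in for whatever logging backend an application would really use.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

captured = []  # hypothetical in-memory log sink for this sketch


class QuietHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        self.send_response(204)  # send_response() also logs the request
        self.end_headers()

    def log_message(self, format, *args):
        # Apply the printf-style format ourselves and keep the result
        # in memory rather than writing it to sys.stderr.
        captured.append("%s %s" % (self.address_string(), format % args))


def request_once(path="/ping"):
    server = HTTPServer(("127.0.0.1", 0), QuietHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", server.server_port, timeout=5)
        conn.request("GET", path)
        status = conn.getresponse().status
        conn.close()
        return status
    finally:
        server.shutdown()
        server.server_close()
```

After request_once() returns, captured holds one entry per logged message, each prefixed with the client address.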

version_string()¶

Returns the server software’s version string. This is a combination of the

server_version and sys_version attributes.

date_time_string(timestamp=None)¶

Returns the date and time given by timestamp (which must be None or in

the format returned by time.time()), formatted for a message

header. If timestamp is omitted, it uses the current date and time.

The result looks like ‘Sun, 06 Nov 1994 08:49:37 GMT’.
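The same date format can be produced outside a handler. The sketch below assumes that email.utils.formatdate() with usegmt=True yields the format shown above; http_date is a hypothetical helper name, and 784111777 is the Unix timestamp for the example date.

```python
import email.utils


def http_date(timestamp):
    """Format a Unix timestamp as an HTTP-style GMT date string."""
    return email.utils.formatdate(timestamp, usegmt=True)


example = http_date(784111777)
```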

log_date_time_string()¶

Returns the current date and time, formatted for logging.

address_string()¶

Returns the client address.

Changed in version 3.3: Previously, a name lookup was performed. To avoid name resolution delays, it now always returns the IP address.

class http.server.SimpleHTTPRequestHandler(request, client_address, server, directory=None)

This class serves files from the directory directory and below,

or the current directory if directory is not provided, directly

mapping the directory structure to HTTP requests.

New in version 3.7: The directory parameter.

Changed in version 3.9: The directory parameter accepts a path-like object.

A lot of the work, such as parsing the request, is done by the base class

BaseHTTPRequestHandler. This class implements the do_GET()

and do_HEAD() functions.

The following are defined as class-level attributes of

SimpleHTTPRequestHandler:

server_version

This will be "SimpleHTTP/" + __version__, where __version__ is defined at the module level.

extensions_map¶

A dictionary mapping suffixes into MIME types, contains custom overrides

for the default system mappings. The mapping is used case-insensitively,

and so should contain only lower-cased keys.

Changed in version 3.9: This dictionary is no longer filled with the default system mappings, but only contains overrides.
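A subclass can extend extensions_map with its own overrides. The following is a sketch only: the class name WasmAwareHandler and the choice of the .wasm suffix are illustrative assumptions.

```python
from http.server import SimpleHTTPRequestHandler


class WasmAwareHandler(SimpleHTTPRequestHandler):
    # Copy the inherited overrides, then add one more.
    # Keys are lower-case because the mapping is used case-insensitively.
    extensions_map = {
        **SimpleHTTPRequestHandler.extensions_map,
        ".wasm": "application/wasm",
    }
```

Building a new dictionary, instead of mutating the inherited one in place, leaves SimpleHTTPRequestHandler itself unchanged.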

The SimpleHTTPRequestHandler class defines the following methods:

do_HEAD()¶

This method serves the ‘HEAD’ request type: it sends the headers it

would send for the equivalent GET request. See the do_GET()

method for a more complete explanation of the possible headers.

do_GET()¶

The request is mapped to a local file by interpreting the request as a

path relative to the current working directory.

If the request was mapped to a directory, the directory is checked for a file named index.html or index.htm (in that order). If found, the file's contents are returned; otherwise a directory listing is generated by calling the list_directory() method. This method uses os.listdir() to scan the directory, and returns a 404 error response if the listdir() fails.

If the request was mapped to a file, it is opened. Any OSError

exception in opening the requested file is mapped to a 404,

‘File not found’ error. If there was a ‘If-Modified-Since’

header in the request, and the file was not modified after this time,

a 304, ‘Not Modified’ response is sent. Otherwise, the content

type is guessed by calling the guess_type() method, which in turn

uses the extensions_map variable, and the file contents are returned.

A ‘Content-type:’ header with the guessed content type is output,

followed by a ‘Content-Length:’ header with the file’s size and a

‘Last-Modified:’ header with the file’s modification time.

Then follows a blank line signifying the end of the headers, and then the

contents of the file are output. If the file’s MIME type starts with

text/ the file is opened in text mode; otherwise binary mode is used.

For example usage, see the implementation of the test() function invocation in the http.server module.

Changed in version 3.7: Support of the 'If-Modified-Since' header.

The SimpleHTTPRequestHandler class can be used in the following

manner in order to create a very basic webserver serving files relative to

the current directory:

import http.server
import socketserver

PORT = 8000

Handler = http.server.SimpleHTTPRequestHandler

with socketserver.TCPServer(("", PORT), Handler) as httpd:
    print("serving at port", PORT)
    httpd.serve_forever()

http.server can also be invoked directly using the -m switch of the interpreter with a port number argument. Similar to the previous example, this serves files relative to the current directory:

python -m http.server 8000

By default, the server binds itself to all interfaces. The option -b/--bind specifies a specific address to which it should bind. Both IPv4 and IPv6 addresses are supported. For example, the following command causes the server to bind to localhost only:

python -m http.server 8000 --bind 127.0.0.1

New in version 3.4: The --bind argument was introduced.

New in version 3.8: The --bind argument was enhanced to support IPv6.

By default, the server uses the current directory. The option -d/--directory specifies a directory from which it should serve the files. For example, the following command uses a specific directory:

python -m http.server --directory /tmp/

New in version 3.7: The --directory argument specifies an alternate directory.
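The directory option also has a programmatic counterpart in the directory parameter of SimpleHTTPRequestHandler. One way to supply it, sketched here with functools.partial, is shown below; serve_and_fetch is a hypothetical helper that serves a directory on an OS-chosen local port, fetches one file, and shuts down.

```python
import functools
import http.client
import threading
from http.server import HTTPServer, SimpleHTTPRequestHandler


def serve_and_fetch(directory, name):
    # Bind the directory argument so the server can still construct the
    # handler as handler(request, client_address, server).
    handler = functools.partial(SimpleHTTPRequestHandler, directory=str(directory))
    server = HTTPServer(("127.0.0.1", 0), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", server.server_port, timeout=5)
        conn.request("GET", "/" + name)
        resp = conn.getresponse()
        status, body = resp.status, resp.read()
        conn.close()
        return status, body
    finally:
        server.shutdown()
        server.server_close()
```

Binding to 127.0.0.1 here mirrors the --bind example above and keeps the sketch from listening on all interfaces.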

class http.server.CGIHTTPRequestHandler(request, client_address, server)

This class is used to serve either files or output of CGI scripts from the

current directory and below. Note that mapping HTTP hierarchic structure to

local directory structure is exactly as in SimpleHTTPRequestHandler.

Note

CGI scripts run by the CGIHTTPRequestHandler class cannot execute

redirects (HTTP code 302), because code 200 (script output follows) is

sent prior to execution of the CGI script. This pre-empts the status

code.

The class will however, run the CGI script, instead of serving it as a file,

if it guesses it to be a CGI script. Only directory-based CGI are used —

the other common server configuration is to treat special extensions as

denoting CGI scripts.

The do_GET() and do_HEAD() functions are modified to run CGI scripts

and serve the output, instead of serving files, if the request leads to

somewhere below the cgi_directories path.

The CGIHTTPRequestHandler defines the following data member:

cgi_directories¶

This defaults to [‘/cgi-bin’, ‘/htbin’] and describes directories to

treat as containing CGI scripts.

The CGIHTTPRequestHandler defines the following method:

do_POST()¶

This method serves the ‘POST’ request type, only allowed for CGI

scripts. Error 501, “Can only POST to CGI scripts”, is output when trying

to POST to a non-CGI url.

Note that CGI scripts will be run with UID of user nobody, for security

reasons. Problems with the CGI script will be translated to error 403.

CGIHTTPRequestHandler can be enabled in the command line by passing the --cgi option:

python -m http.server --cgi 8000

requests.Session – Python Requests

This document covers some of Requests' more advanced features.

Session Objects¶

The Session object allows you to persist certain parameters across

requests. It also persists cookies across all requests made from the

Session instance, and will use urllib3’s connection pooling. So if

you’re making several requests to the same host, the underlying TCP

connection will be reused, which can result in a significant performance

increase (see HTTP persistent connection).

A Session object has all the methods of the main Requests API.

Let’s persist some cookies across requests:

s = requests.Session()

s.get('')
r = s.get('')

print(r.text)
# '{"cookies": {"sessioncookie": "123456789"}}'

Sessions can also be used to provide default data to the request methods. This

is done by providing data to the properties on a Session object:

s = requests.Session()
s.auth = ('user', 'pass')
s.headers.update({'x-test': 'true'})

# both 'x-test' and 'x-test2' are sent
s.get('', headers={'x-test2': 'true'})

Any dictionaries that you pass to a request method will be merged with the

session-level values that are set. The method-level parameters override session

parameters.

Note, however, that method-level parameters will not be persisted across

requests, even if using a session. This example will only send the cookies

with the first request, but not the second:

r = s.get('', cookies={'from-my': 'browser'})
print(r.text)
# '{"cookies": {"from-my": "browser"}}'

r = s.get('')
print(r.text)
# '{"cookies": {}}'

If you want to manually add cookies to your session, use the Cookie utility functions to manipulate Session.cookies.

Sessions can also be used as context managers:

with requests.Session() as s:
    s.get('')

This will make sure the session is closed as soon as the with block is

exited, even if unhandled exceptions occurred.

Remove a Value From a Dict Parameter

Sometimes you’ll want to omit session-level keys from a dict parameter. To

do this, you simply set that key’s value to None in the method-level

parameter. It will automatically be omitted.
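The merging rules can be observed without sending anything over the network by preparing a request through the session. In this sketch the URL https://example.org/ and the x-drop header name are placeholders chosen for illustration.

```python
from requests import Request, Session

s = Session()
s.headers.update({'x-test': 'true', 'x-drop': 'session-value'})

# Method-level headers are merged with the session-level ones;
# a value of None removes the session-level key for this request only.
req = Request('GET', 'https://example.org/',
              headers={'x-test2': 'true', 'x-drop': None})
prepped = s.prepare_request(req)

sent_headers = prepped.headers
```

Here sent_headers contains both x-test and x-test2 but not x-drop, while s.headers itself still holds x-drop for later requests.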

All values that are contained within a session are directly available to you.

See the Session API Docs to learn more.

Request and Response Objects¶

Whenever a call is made to requests.get() and friends, you are doing two

major things. First, you are constructing a Request object which will be

sent off to a server to request or query some resource. Second, a Response

object is generated once Requests gets a response back from the server.

The Response object contains all of the information returned by the server and

also contains the Request object you created originally. Here is a simple

request to get some very important information from Wikipedia’s servers:

>>> r = requests.get('')

If we want to access the headers the server sent back to us, we do this:

>>> r.headers

{‘content-length’: ‘56170’, ‘x-content-type-options’: ‘nosniff’, ‘x-cache’:

‘HIT from, MISS from ‘, ‘content-encoding’:

‘gzip’, ‘age’: ‘3080’, ‘content-language’: ‘en’, ‘vary’: ‘Accept-Encoding, Cookie’,

‘server’: ‘Apache’, ‘last-modified’: ‘Wed, 13 Jun 2012 01:33:50 GMT’,

‘connection’: ‘close’, ‘cache-control’: ‘private, s-maxage=0, max-age=0,

must-revalidate’, ‘date’: ‘Thu, 14 Jun 2012 12:59:39 GMT’, ‘content-type’:

‘text/html; charset=UTF-8’, ‘x-cache-lookup’: ‘HIT from,

MISS from ‘}

However, if we want to get the headers we sent the server, we simply access the

request, and then the request’s headers:

>>> r.request.headers
{'Accept-Encoding': 'identity, deflate, compress, gzip',
'Accept': '*/*', 'User-Agent': 'python-requests/1.2.0'}

Prepared Requests¶

Whenever you receive a Response object

from an API call or a Session call, the request attribute is actually the

PreparedRequest that was used. In some cases you may wish to do some extra

work to the body or headers (or anything else really) before sending a

request. The simple recipe for this is the following:

from requests import Request, Session

s = Session()

req = Request('POST', url, data=data, headers=headers)
prepped = req.prepare()

# do something with prepped.body
prepped.body = 'No, I want exactly this as the body.'

# do something with prepped.headers
del prepped.headers['Content-Type']

resp = s.send(prepped,
    stream=stream,
    verify=verify,
    proxies=proxies,
    cert=cert,
    timeout=timeout
)

print(resp.status_code)

Since you are not doing anything special with the Request object, you

prepare it immediately and modify the PreparedRequest object. You then

send that with the other parameters you would have sent to requests.* or Session.*.

However, the above code will lose some of the advantages of having a Requests

Session object. In particular,

Session-level state such as cookies will

not get applied to your request. To get a

PreparedRequest with that state

applied, replace the call to Request.prepare() with a call to Session.prepare_request(), like this:

req = Request('GET', url, data=data, headers=headers)
prepped = s.prepare_request(req)

# do something with prepped.body
prepped.body = 'Seriously, send exactly these bytes.'

# do something with prepped.headers
prepped.headers['Keep-Dead'] = 'parrot'

When you are using the prepared request flow, keep in mind that it does not take into account the environment.

This can cause problems if you are using environment variables to change the behaviour of requests.

For example: Self-signed SSL certificates specified in REQUESTS_CA_BUNDLE will not be taken into account.

As a result an SSL: CERTIFICATE_VERIFY_FAILED is thrown.

You can get around this behaviour by explicitly merging the environment settings into your session:

req = Request('GET', url)
prepped = s.prepare_request(req)

# Merge environment settings into session
settings = s.merge_environment_settings(prepped.url, {}, None, None, None)
resp = s.send(prepped, **settings)

SSL Cert Verification¶

Requests verifies SSL certificates for HTTPS requests, just like a web browser.

By default, SSL verification is enabled, and Requests will throw a SSLError if

it’s unable to verify the certificate:

>>> requests.get('')
requests.exceptions.SSLError: hostname '' doesn't match either of '*. ', ''

I don’t have SSL setup on this domain, so it throws an exception. Excellent. GitHub does though:

You can pass verify the path to a CA_BUNDLE file or directory with certificates of trusted CAs:

>>> requests.get('', verify='/path/to/certfile')

or persistent:

s = requests.Session()
s.verify = '/path/to/certfile'

Note

If verify is set to a path to a directory, the directory must have been processed using

the c_rehash utility supplied with OpenSSL.

This list of trusted CAs can also be specified through the REQUESTS_CA_BUNDLE environment variable.

If REQUESTS_CA_BUNDLE is not set, CURL_CA_BUNDLE will be used as fallback.

Requests can also ignore verifying the SSL certificate if you set verify to False:

>>> requests.get('', verify=False)

Note that when verify is set to False, requests will accept any TLS

certificate presented by the server, and will ignore hostname mismatches

and/or expired certificates, which will make your application vulnerable to

man-in-the-middle (MitM) attacks. Setting verify to False may be useful

during local development or testing.

By default, verify is set to True. Option verify only applies to host certs.

Client Side Certificates¶

You can also specify a local cert to use as client side certificate, as a single

file (containing the private key and the certificate) or as a tuple of both

files’ paths:

>>> requests.get('', cert=('/path/', '/path/'))

or persistent:

s = requests.Session()
s.cert = '/path/'

If you specify a wrong path or an invalid cert, you'll get an SSLError:

>>> requests.get('', cert='/wrong_path/')
SSLError: [Errno 336265225] _ssl.c:347: error:140B0009:SSL routines:SSL_CTX_use_PrivateKey_file:PEM lib

Warning

The private key to your local certificate must be unencrypted.

Currently, Requests does not support using encrypted keys.

CA Certificates¶

Requests uses certificates from the package certifi. This allows for users

to update their trusted certificates without changing the version of Requests.

Before version 2.16, Requests bundled a set of root CAs that it trusted,

sourced from the Mozilla trust store. The certificates were only updated

once for each Requests version. When certifi was not installed, this led to

extremely out-of-date certificate bundles when using significantly older

versions of Requests.

For the sake of security we recommend upgrading certifi frequently!

Body Content Workflow¶

By default, when you make a request, the body of the response is downloaded

immediately. You can override this behaviour and defer downloading the response

body until you access the Response.content attribute with the stream parameter:

tarball_url = ''
r = requests.get(tarball_url, stream=True)

At this point only the response headers have been downloaded and the connection

remains open, hence allowing us to make content retrieval conditional:

if int(r.headers['content-length']) < TOO_LONG:
    content = r.content
    ...

You can further control the workflow by use of the Response.iter_content() and Response.iter_lines() methods. Alternatively, you can read the undecoded body from the underlying urllib3.HTTPResponse at Response.raw.

If you set stream to True when making a request, Requests cannot release the connection back to the pool unless you consume all the data or call Response.close. This can lead to

inefficiency with connections. If you find yourself partially reading request

bodies (or not reading them at all) while using stream=True, you should

make the request within a with statement to ensure it’s always closed:

with requests.get('', stream=True) as r:
    # Do things with the response here.

Keep-Alive¶

Excellent news — thanks to urllib3, keep-alive is 100% automatic within a session!

Any requests that you make within a session will automatically reuse the appropriate

connection!

Note that connections are only released back to the pool for reuse once all body

data has been read; be sure to either set stream to False or read the

content property of the Response object.

Streaming Uploads¶

Requests supports streaming uploads, which allow you to send large streams or

files without reading them into memory. To stream and upload, simply provide a

file-like object for your body:

with open('massive-body', 'rb') as f:
    requests.post('', data=f)

It is strongly recommended that you open files in binary

mode. This is because Requests may attempt to provide

the Content-Length header for you, and if it does this value

will be set to the number of bytes in the file. Errors may occur

if you open the file in text mode.
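The reason character counts and byte counts can disagree is easy to see with any non-ASCII text. The snippet below is only an illustration of that mismatch using a made-up sample string; it does not show how Requests itself computes the header.

```python
text = "café"  # sample string containing one non-ASCII character

char_count = len(text)                   # what a text-mode read would measure
byte_count = len(text.encode("utf-8"))   # what is actually sent on the wire
```

A Content-Length derived from char_count would be one byte too short for this string, which is why binary mode is the safer choice.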

Chunk-Encoded Requests¶

Requests also supports Chunked transfer encoding for outgoing and incoming requests.

To send a chunk-encoded request, simply provide a generator (or any iterator without

a length) for your body:

def gen():
    yield 'hi'
    yield 'there'

requests.post('', data=gen())

For chunked encoded responses, it's best to iterate over the data using Response.iter_content(). In

an ideal situation you’ll have set stream=True on the request, in which

case you can iterate chunk-by-chunk by calling iter_content with a chunk_size

parameter of None. If you want to set a maximum size of the chunk,

you can set a chunk_size parameter to any integer.

POST Multiple Multipart-Encoded Files¶

You can send multiple files in one request. For example, suppose you want to

upload image files to an HTML form with a multiple file field ‘images’:

To do that, just set files to a list of tuples of (form_field_name, file_info):

>>> url = ''
>>> multiple_files = [
...     ('images', ('', open('', 'rb'), 'image/png')),
...     ('images', ('', open('', 'rb'), 'image/png'))]
>>> r = requests.post(url, files=multiple_files)
>>> r.text
{
  ...
  'files': {'images': 'data:image/png;base64,iVBORw ....'}
  'Content-Type': 'multipart/form-data; boundary=3131623adb2043caaeb5538cc7aa0b3a',
  ...
}

Event Hooks¶

Requests has a hook system that you can use to manipulate portions of

the request process, or signal event handling.

Available hooks:

response:

The response generated from a Request.

You can assign a hook function on a per-request basis by passing a

{hook_name: callback_function} dictionary to the hooks request

parameter:

hooks={‘response’: print_url}

That callback_function will receive a chunk of data as its first

argument.

def print_url(r, *args, **kwargs):
    print(r.url)

Your callback function must handle its own exceptions. Any unhandled exception won't pass silently and thus should be handled by the code calling Requests.

If the callback function returns a value, it is assumed that it is to

replace the data that was passed in. If the function doesn’t return

anything, nothing else is affected.

def record_hook(r, *args, **kwargs):
    r.hook_called = True
    return r

Let’s print some request method arguments at runtime:

>>> requests.get('', hooks={'response': print_url})

You can add multiple hooks to a single request. Let's call two hooks at once:

>>> r = requests.get('', hooks={'response': [print_url, record_hook]})
>>> r.hook_called

True

You can also add hooks to a Session instance. Any hooks you add will then

be called on every request made to the session. For example:

>>> s = requests.Session()
>>> s.hooks['response'].append(print_url)

A Session can have multiple hooks, which will be called in the order

they are added.

Custom Authentication¶

Requests allows you to specify your own authentication mechanism.

Any callable which is passed as the auth argument to a request method will

have the opportunity to modify the request before it is dispatched.

Authentication implementations are subclasses of AuthBase,

and are easy to define. Requests provides two common authentication scheme

implementations in HTTPBasicAuth and

HTTPDigestAuth.

Let’s pretend that we have a web service that will only respond if the

X-Pizza header is set to a password value. Unlikely, but just go with it.

from requests.auth import AuthBase

class PizzaAuth(AuthBase):
    """Attaches HTTP Pizza Authentication to the given Request object."""
    def __init__(self, username):
        # setup any auth-related data here
        self.username = username

    def __call__(self, r):
        # modify and return the request
        r.headers['X-Pizza'] = self.username
        return r

Then, we can make a request using our Pizza Auth:

>>> requests.get('', auth=PizzaAuth('kenneth'))

Streaming Requests¶

With Response.iter_lines() you can easily

iterate over streaming APIs such as the Twitter Streaming

API. Simply

set stream to True and iterate over the response with

iter_lines:

import json

import requests

r = requests.get('', stream=True)

for line in r.iter_lines():

    # filter out keep-alive new lines
    if line:
        decoded_line = line.decode('utf-8')
        print(json.loads(decoded_line))

When using decode_unicode=True with Response.iter_lines() or Response.iter_content(), you'll want to provide a fallback encoding in the event the server doesn't provide one:

if r.encoding is None:
    r.encoding = 'utf-8'

for line in r.iter_lines(decode_unicode=True):
    print(json.loads(line))

iter_lines is not reentrant safe.

Calling this method multiple times causes some of the received data to be lost. In case you need to call it from multiple places, use the resulting iterator object instead:

lines = r.iter_lines()
# Save the first line for later or just skip it

first_line = next(lines)

for line in lines:
    print(line)

Proxies¶

If you need to use a proxy, you can configure individual requests with the

proxies argument to any request method:

proxies = {

”: ”,

”: ”, }

requests.get('', proxies=proxies)

Alternatively you can configure it once for an entire

Session:

session = requests.Session()
session.proxies.update(proxies)

When the proxies configuration is not overridden in python as shown above,

by default Requests relies on the proxy configuration defined by standard

environment variables http_proxy, https_proxy, no_proxy and curl_ca_bundle. Uppercase variants of these variables are also supported.

You can therefore set them to configure Requests (only set the ones relevant

to your needs):

$ export HTTP_PROXY=”

$ export HTTPS_PROXY=”

$ python

>>> import requests

To use HTTP Basic Auth with your proxy, use the user:password@host/

syntax in any of the above configuration entries:

$ export HTTPS_PROXY="user:pass@10.10.1.10:1080"

>>> proxies = {”: ‘user:pass@10. 10:3128/’}

Storing sensitive username and password information in an

environment variable or a version-controlled file is a security risk and is

highly discouraged.

To give a proxy for a specific scheme and host, use the

scheme://hostname form for the key. This will match for

any request to the given scheme and exact hostname.

proxies = {”: ”}

Note that proxy URLs must include the scheme.
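To see which entry would apply to a given URL, the lookup can be tried offline. This sketch assumes the requests.utils.select_proxy helper is available in the installed version; the proxy addresses and target URLs are made-up placeholders.

```python
from requests.utils import select_proxy

# Hypothetical configuration: one scheme-wide proxy and one
# proxy for a specific scheme and host.
proxies = {
    'http': 'http://10.10.1.10:3128',
    'http://10.20.1.128': 'http://10.10.1.10:5323',
}

specific = select_proxy('http://10.20.1.128/status', proxies)
generic = select_proxy('http://example.org/', proxies)
```

The more specific scheme://hostname key takes precedence for the matching host, and other hosts fall back to the scheme-wide entry.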

Finally, note that using a proxy for connections typically requires your

local machine to trust the proxy’s root certificate. By default the list of

certificates trusted by Requests can be found with:

from requests.utils import DEFAULT_CA_BUNDLE_PATH

print(DEFAULT_CA_BUNDLE_PATH)

You can override this default certificate bundle by setting the standard
curl_ca_bundle environment variable to another file path:

$ export curl_ca_bundle="/usr/local/myproxy_info/"

$ export _proxy=""

SOCKS¶

New in version 2. 0.

In addition to basic HTTP proxies, Requests also supports proxies using the

SOCKS protocol. This is an optional feature that requires that additional

third-party libraries be installed before use.

You can get the dependencies for this feature from pip:

$ python -m pip install requests[socks]

Once you’ve installed those dependencies, using a SOCKS proxy is just as easy
as using an HTTP one:

proxies = {
    'http': 'socks5://user:pass@host:port',
    'https': 'socks5://user:pass@host:port'
}

Using the scheme socks5 causes the DNS resolution to happen on the client, rather than on the proxy server. This is in line with curl, which uses the scheme to decide whether to do the DNS resolution on the client or proxy. If you want to resolve the domains on the proxy server, use socks5h as the scheme.
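The choice between the two schemes can be made explicit in code. This is only a sketch: `socks_proxy_url` is a hypothetical helper, and the host and port are placeholders.

```python
def socks_proxy_url(host, port, resolve_on_proxy=False):
    """Build a SOCKS5 proxy URL; socks5h asks the proxy to resolve hostnames."""
    scheme = "socks5h" if resolve_on_proxy else "socks5"
    return f"{scheme}://{host}:{port}"


# DNS resolved locally (socks5) versus on the proxy server (socks5h).
local_dns = socks_proxy_url("proxy.example", 1080)
remote_dns = socks_proxy_url("proxy.example", 1080, resolve_on_proxy=True)

proxies = {"http": remote_dns, "https": remote_dns}
```

Resolving on the proxy is usually what you want when the target hostnames are only known inside the proxy's network.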

Compliance¶

Requests is intended to be compliant with all relevant specifications and

RFCs where that compliance will not cause difficulties for users. This

attention to the specification can lead to some behaviour that may seem

unusual to those not familiar with the relevant specification.

Encodings¶

When you receive a response, Requests makes a guess at the encoding to
use for decoding the response when you access the Response.text attribute.
Requests will first check for an encoding in the HTTP header, and if none
is present, will use charset_normalizer or chardet to attempt to
guess the encoding.

If chardet is installed, requests uses it; however, for Python 3
chardet is no longer a mandatory dependency. The chardet
library is an LGPL-licensed dependency and some users of requests
cannot depend on mandatory LGPL-licensed dependencies.

When you install requests without specifying the [use_chardet_on_py3] extra,
and chardet is not already installed, requests uses charset-normalizer
(MIT-licensed) to guess the encoding. For Python 2, requests uses only
chardet, which is a mandatory dependency there.

The only time Requests will not guess the encoding is if no explicit charset
is present in the HTTP headers and the Content-Type
header contains text. In this situation, RFC 2616 specifies
that the default charset must be ISO-8859-1. Requests follows the
specification in this case. If you require a different encoding, you can
manually set the Response.encoding
property, or use the raw Response.content.
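The rule described above can be written down as a short decision function. This is a sketch of the rule as stated in the text, built on the standard library's header parser; it is not Requests' own implementation, and `declared_encoding` is a hypothetical name.

```python
from email.message import Message


def declared_encoding(content_type):
    """Return the encoding implied by a Content-Type value, or None to guess."""
    msg = Message()
    msg["Content-Type"] = content_type
    charset = msg.get_content_charset()  # lower-cased charset parameter, if any
    if charset:
        return charset            # an explicit charset always wins
    if "text" in content_type:
        return "ISO-8859-1"       # RFC 2616 default for text types
    return None                   # nothing declared: fall back to detection


explicit = declared_encoding("text/html; charset=UTF-8")
text_default = declared_encoding("text/plain")
undeclared = declared_encoding("application/octet-stream")
```

A return value of None marks the case where a detector such as charset_normalizer or chardet would be consulted.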

HTTP Verbs¶

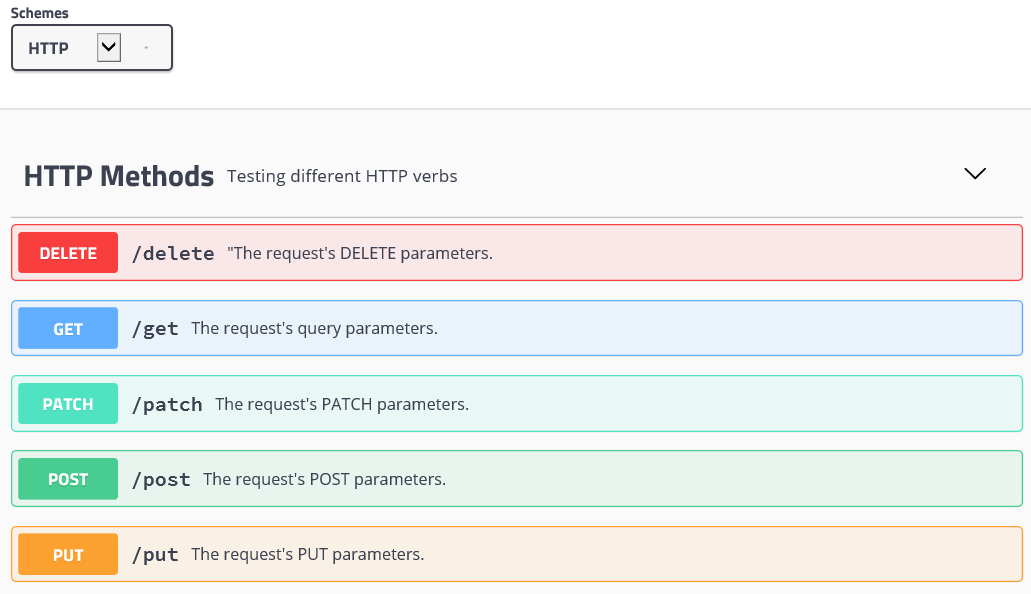

Requests provides access to almost the full range of HTTP verbs: GET, OPTIONS,

HEAD, POST, PUT, PATCH and DELETE. The following provides detailed examples of

using these various verbs in Requests, using the GitHub API.

We will begin with the verb most commonly used: GET. HTTP GET is an idempotent

method that returns a resource from a given URL. As a result, it is the verb

you ought to use when attempting to retrieve data from a web location. An

example usage would be attempting to get information about a specific commit

from GitHub. Suppose we wanted commit a050faf on Requests. We would get it

like so:

We should confirm that GitHub responded correctly. If it has, we want to work

out what type of content it is. Do this like so:

>>> if r.status_code == requests.codes.ok:
...     print(r.headers['content-type'])
...

application/json; charset=utf-8

So, GitHub returns JSON. That’s great, we can use the r.json() method to parse it into Python objects.

>>> commit_data = r.json()

>>> print(commit_data.keys())
['committer', 'author', 'url', 'tree', 'sha', 'parents', 'message']

>>> print(commit_data['committer'])
{'date': '2012-05-10T11:10:50-07:00', 'email': '', 'name': 'Kenneth Reitz'}

>>> print(commit_data['message'])
makin' history

So far, so simple. Well, let’s investigate the GitHub API a little bit. Now,

we could look at the documentation, but we might have a little more fun if we

use Requests instead. We can take advantage of the Requests OPTIONS verb to

see what kinds of HTTP methods are supported on the url we just used.

>>> verbs = requests.options()

>>> verbs.status_code
500

Uh, what? That’s unhelpful! Turns out GitHub, like many API providers, doesn’t
actually implement the OPTIONS method. This is an annoying oversight, but it’s
OK, we can just use the boring documentation. If GitHub had correctly
implemented OPTIONS, however, they should return the allowed methods in the
headers, e.g.

>>> verbs = requests.options('')

>>> print(verbs.headers['allow'])
GET, HEAD, POST, OPTIONS

Turning to the documentation, we see that the only other method allowed for

commits is POST, which creates a new commit. As we’re using the Requests repo,

we should probably avoid making ham-handed POSTS to it. Instead, let’s play

with the Issues feature of GitHub.

This documentation was added in response to

Issue #482. Given that

this issue already exists, we will use it as an example. Let’s start by getting it.

200

>>> issue = r.json()

>>> print(issue['title'])
Feature any verb in docs

>>> print(issue['comments'])
3

Cool, we have three comments. Let’s take a look at the last of them.

>>> r = requests.get(r.url + '/comments')

>>> comments = r.json()

>>> print(comments[0].keys())
['body', 'url', 'created_at', 'updated_at', 'user', 'id']

>>> print(comments[2]['body'])
Probably in the "advanced" section

Well, that seems like a silly place. Let’s post a comment telling the poster

that he’s silly. Who is the poster, anyway?

>>> print(comments[2]['user']['login'])

kennethreitz

OK, so let’s tell this Kenneth guy that we think this example should go in the

quickstart guide instead. According to the GitHub API doc, the way to do this

is to POST to the thread. Let’s do it.

>>> body = json.dumps({u"body": u"Sounds great! I'll get right on it!"})

>>> url = u""

>>> r = requests.post(url=url, data=body)

>>> r.status_code
404

Huh, that’s weird. We probably need to authenticate. That’ll be a pain, right?

Wrong. Requests makes it easy to use many forms of authentication, including

the very common Basic Auth.

>>> from requests.auth import HTTPBasicAuth

>>> auth = HTTPBasicAuth('', 'not_a_real_password')

>>> r = requests.post(url=url, data=body, auth=auth)

>>> r.status_code
201

>>> content = r.json()

>>> print(content['body'])
Sounds great! I'll get right on it.

Brilliant. Oh, wait, no! I meant to add that it would take me a while, because

I had to go feed my cat. If only I could edit this comment! Happily, GitHub

allows us to use another HTTP verb, PATCH, to edit this comment. Let’s do

that.

>>> print(content[u"id"])
5804413

>>> body = json.dumps({u"body": u"Sounds great! I'll get right on it once I feed my cat."})

Excellent. Now, just to torture this Kenneth guy, I’ve decided to let him

sweat and not tell him that I’m working on this. That means I want to delete

this comment. GitHub lets us delete comments using the incredibly aptly named

DELETE method. Let’s get rid of it.

>>> r = requests.delete(url=url, auth=auth)

>>> r.status_code
204

>>> r.headers['status']
'204 No Content'

Excellent. All gone. The last thing I want to know is how much of my ratelimit

I’ve used. Let’s find out. GitHub sends that information in the headers, so

rather than download the whole page I’ll send a HEAD request to get the

headers.

>>> print(r.headers)
...
'x-ratelimit-remaining': '4995'
'x-ratelimit-limit': '5000'
...

Excellent. Time to write a Python program that abuses the GitHub API in all

kinds of exciting ways, 4995 more times.

Custom Verbs¶

From time to time you may be working with a server that, for whatever reason,

allows use or even requires use of HTTP verbs not covered above. One example of

this would be the MKCOL method some WEBDAV servers use. Do not fret, these can

still be used with Requests. These make use of the built-in .request
method. For example:

>>> r = requests.request('MKCOL', url, data=data)

>>> r.status_code
200 # Assuming your call was correct

Utilising this, you can make use of any method verb that your server allows.
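To experiment with a custom verb without a WebDAV server, you can run a throwaway local server. The sketch below uses only the standard library: the handler answers MKCOL through a `do_MKCOL` method (the `do_<METHOD>` naming is how `http.server` dispatches requests), and the client side uses `http.client` as a stand-in for `requests.request('MKCOL', url)`. The class name, helper name, path, and the 201 status are assumptions made for the example.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class MkcolHandler(BaseHTTPRequestHandler):
    """Handler that understands one custom verb, MKCOL."""

    def do_MKCOL(self):
        # Called automatically for requests whose method is MKCOL.
        self.send_response(201)
        self.send_header("Content-Length", "0")
        self.end_headers()

    def log_message(self, format, *args):
        # Silence the default per-request logging to stderr.
        pass


def send_verb(method, path="/newdir"):
    """Start a local server, send one request with the given verb, return the status."""
    server = HTTPServer(("127.0.0.1", 0), MkcolHandler)  # port 0: any free port
    worker = threading.Thread(target=server.serve_forever, daemon=True)
    worker.start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", server.server_port, timeout=5)
        conn.request(method, path)
        response = conn.getresponse()
        response.read()
        conn.close()
        return response.status
    finally:
        server.shutdown()
        server.server_close()


mkcol_status = send_verb("MKCOL")  # handled by do_MKCOL
spam_status = send_verb("SPAM")    # no do_SPAM method, so the server refuses it
```

A verb the handler does not implement is answered with 501 Not Implemented, which is a quick way to tell "the server does not support this method" apart from an authentication or routing problem.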

Transport Adapters¶

As of v1.0.0, Requests has moved to a modular internal design. Part of the

reason this was done was to implement Transport Adapters, originally

described here. Transport Adapters provide a mechanism to define interaction

methods for an HTTP service. In particular, they allow you to apply per-service

configuration.

Requests ships with a single Transport Adapter, the HTTPAdapter. This adapter provides the default Requests

interaction with HTTP and HTTPS using the powerful urllib3 library. Whenever

a Requests Session is initialized, one of these is

attached to the Session object for HTTP, and one

for HTTPS.

Requests enables users to create and use their own Transport Adapters that

provide specific functionality. Once created, a Transport Adapter can be

mounted to a Session object, along with an indication of which web services

it should apply to.

>>> (”, MyAdapter())

The mount call registers a specific instance of a Transport Adapter to a

prefix. Once mounted, any HTTP request made using that session whose URL starts

with the given prefix will use the given Transport Adapter.
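Prefix matching of this kind can be illustrated with a few lines of plain Python. This is a sketch, not Requests' code: `pick_adapter` is a hypothetical function, the adapters are represented by strings, the service prefix is a placeholder, and preferring the longest matching prefix is an assumption of the example.

```python
def pick_adapter(mounts, url):
    """Return the value mounted at the longest prefix that the URL starts with."""
    for prefix in sorted(mounts, key=len, reverse=True):
        if url.lower().startswith(prefix.lower()):
            return mounts[prefix]
    raise LookupError(f"no adapter mounted for {url!r}")


mounts = {
    "https://": "default-https-adapter",
    "http://": "default-http-adapter",
    "https://api.example/": "custom-adapter",  # hypothetical service prefix
}

specific = pick_adapter(mounts, "https://api.example/v1/items")
generic = pick_adapter(mounts, "https://other.example/")
```

Checking longer prefixes first lets a service-specific adapter take precedence over the general one for its scheme.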

Many of the details of implementing a Transport Adapter are beyond the scope of
this documentation, but take a look at the next example for a simple SSL
use-case. For more than that, you might look at subclassing the
BaseAdapter.

Example: Specific SSL Version¶

The Requests team has made a specific choice to use whatever SSL version is

default in the underlying library (urllib3). Normally this is fine, but from

time to time, you might find yourself needing to connect to a service-endpoint

that uses a version that isn’t compatible with the default.

You can use Transport Adapters for this by taking most of the existing

implementation of HTTPAdapter, and adding a parameter ssl_version that gets

passed-through to urllib3. We’ll make a Transport Adapter that instructs the

library to use SSLv3:

import ssl
from urllib3.poolmanager import PoolManager

from requests.adapters import HTTPAdapter


class Ssl3HttpAdapter(HTTPAdapter):
    """"Transport adapter" that allows us to use SSLv3."""

    def init_poolmanager(self, connections, maxsize, block=False):
        # Note: ssl.PROTOCOL_SSLv3 is deprecated and may be missing from
        # modern Python/OpenSSL builds.
        self.poolmanager = PoolManager(
            num_pools=connections, maxsize=maxsize,
            block=block, ssl_version=ssl.PROTOCOL_SSLv3)

Blocking Or Non-Blocking?¶

With the default Transport Adapter in place, Requests does not provide any kind
of non-blocking IO. The Response.content
property will block until the entire response has been downloaded. If
you require more granularity, the streaming features of the library (see
Streaming Requests) allow you to retrieve smaller quantities of the
response at a time. However, these calls will still block.

If you are concerned about the use of blocking IO, there are lots of projects
out there that combine Requests with one of Python’s asynchronicity frameworks.
Some excellent examples are requests-threads, grequests, requests-futures, and httpx.

Timeouts¶

Most requests to external servers should have a timeout attached, in case the

server is not responding in a timely manner. By default, requests do not time

out unless a timeout value is set explicitly. Without a timeout, your code may

hang for minutes or more.

The connect timeout is the number of seconds Requests will wait for your
client to establish a connection to a remote machine (corresponding to the
connect() call on the socket). It’s a good practice to set connect timeouts
to slightly larger than a multiple of 3, which is the default TCP packet
retransmission window.

Once your client has connected to the server and sent the HTTP request, the

read timeout is the number of seconds the client will wait for the server

to send a response. (Specifically, it’s the number of seconds that the client
will wait between bytes sent from the server. In 99.9% of cases, this is the
time before the server sends the first byte).

If you specify a single value for the timeout, like this:

r = requests.get('', timeout=5)

The timeout value will be applied to both the connect and the read
timeouts. Specify a tuple if you would like to set the values separately:

r = requests.get('', timeout=(3.05, 27))
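How one value maps onto the two timeouts can be sketched in plain Python. The function below only illustrates the rule described above; `split_timeout` is a hypothetical name, not a Requests API.

```python
def split_timeout(timeout):
    """Return (connect, read) from a single number, a 2-tuple, or None."""
    if isinstance(timeout, tuple):
        connect, read = timeout   # separate values supplied by the caller
        return connect, read
    return timeout, timeout       # one value (or None) applies to both


both_same = split_timeout(5)
separate = split_timeout((3.05, 27))
no_limit = split_timeout(None)
```

Keeping the connect timeout short and the read timeout longer is a common pattern, since a slow server is more likely than a slow handshake.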

If the remote server is very slow, you can tell Requests to wait forever for

a response, by passing None as a timeout value and then retrieving a cup of

coffee.

r = requests.get('', timeout=None)

Python Request: Get & Post HTTP & JSON … – DataCamp

Learn about the basics of HTTP and also about the request library in Python to make different types of requests.

Check out DataCamp’s Importing Data in Python (Part 2) course that covers making HTTP requests.

In this tutorial, we will cover how to download an image, pass an argument to a request, and how to perform a ‘post’ request to post the data to a particular route. Also, you’ll learn how to obtain a JSON response to do a more dynamic operation.

HTTP

Libraries in Python to make HTTP Request

Request in Python

Using GET Request

Downloading and Saving an image using the Request Module

Passing Argument in the Request

Using POST Request

JSON Response

HTTP stands for ‘HyperText Transfer Protocol’, in which communication takes place through a request made by the client and a response returned by the server.

For example, you can use a client (a browser) to search for a ‘dog’ image on Google. The browser sends an HTTP request to the server, i.e., the place where the dog image is hosted, and the server responds with a status code and the requested content. This process is also known as the request-response cycle.

You can also look at this article, What is HTTP, for a more detailed explanation.

There are many libraries for making HTTP requests in Python, such as httplib, urllib, httplib2, and treq, but requests is the simplest and best documented of them.

You’ll be using the requests library for this tutorial; the command to install it is below:

pip install requests

According to Wikipedia, “Requests is a Python HTTP library, released under the Apache2 License. The goal of the project is to make HTTP requests simpler and more human-friendly. The current version is 2.22.0.”

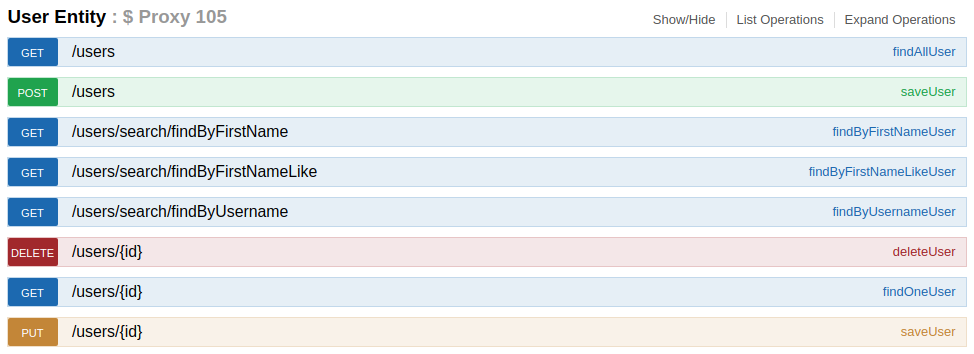

A GET request is the most common method and is used to obtain the requested data from a specific server.

You need to import the required modules in your development environment using the following commands:

import requests

You can retrieve the data from a specific resource by using ‘requests.get('specific_url')’, where ‘r’ is the response object.

r = requests.get('')

Status Code

According to Wikipedia, “Status codes are issued by a server in response to a client’s request made to the server.” There are lots of other status codes, and detailed explanations can be found here: HTTP Status Code.

However, the two most common status codes are explained below:

r.status_code

After running the above code, you can see that the status code is ‘200’, which means ‘OK’: the request was successful.
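If you want to turn a numeric status code into its standard phrase, the standard library's `http.HTTPStatus` enum can do it. This small sketch needs no network access.

```python
from http import HTTPStatus

ok = HTTPStatus(200)
not_found = HTTPStatus(404)

# Each member carries both the numeric value and the reason phrase.
print(ok.value, ok.phrase)
print(not_found.value, not_found.phrase)
```

The same lookup works for any registered status code, which is handy when logging responses.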

You can view the response headers by using ‘.headers’, which returns a Python dictionary-like object. It holds additional information, keyed by case-insensitive header names, such as the resource type, the server name, and the version, as shown by the code below.

r.headers

The vital information obtained in the above code is the Server name as ‘Apache’, content type, Encoding, etc.

r.headers['Content-Type']

You can look up the content type of the response with ‘content-type’; the header name is case insensitive, so ‘Content-Type’ gives the same result.
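Case-insensitive header lookup can be tried out offline. The sketch below uses the standard library's `email.message.Message` as a stand-in for a response's header mapping (Requests has its own case-insensitive dictionary type); the header value is a made-up example.

```python
from email.message import Message

# Stand-in for a response's headers; the value is a made-up example.
headers = Message()
headers["Content-Type"] = "text/html; charset=UTF-8"

# Lookups ignore the case of the header name.
lower = headers["content-type"]
upper = headers["CONTENT-TYPE"]
```

Both lookups return the same string, which is why code that reads headers does not need to normalise the name first.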

Response Content

You can get the HTML text of a page by using ‘r.text’; Requests automatically decodes the content from the server and returns it as Unicode, as in the code below:

You get the entire page of HTML, which can then be parsed with the help of an HTML parser.

‘\r\n\r\n\r\n