Web Scraping Using Node JS in JavaScript – Analytics Vidhya

This article was published as a part of the Data Science Blogathon.

Introduction

Web scraping, also known as web data extraction or web harvesting, is the process of gathering information from across the web. Data is like oxygen for startups and freelancers who want to start a business or a project in any domain. Suppose you want to find the price of a product on an eCommerce website. That is easy to do once, but now say you have to repeat the exercise for thousands of products across multiple eCommerce websites. Doing it manually is not a good option at all.

Get to know the Tool

JavaScript is a popular programming language that runs in every major web browser.

Node JS is a runtime environment that lets JavaScript run outside the browser and comes with a set of useful built-in libraries.

In short, Node JS adds functionality and features to JavaScript through its libraries and makes it more powerful.

Hands-On-Session

Let’s understand web scraping using Node JS with an example. Suppose you want to analyze the price fluctuations of some products on an eCommerce website. You would list all the possible causes and cross-check each of them against each product. Similarly, when you want to scrape data, you list the parent HTML tags and check their respective child HTML tags, repeating this activity until you have extracted the data.

Steps Required for Web Scraping

Creating the file

Install & Call the required libraries

Select the Website & Data needed to Scrape

Set the URL & Check the Response Code

Inspect & Find the Proper HTML tags

Include the HTML tags in our Code

Cross-check the Scraped Data

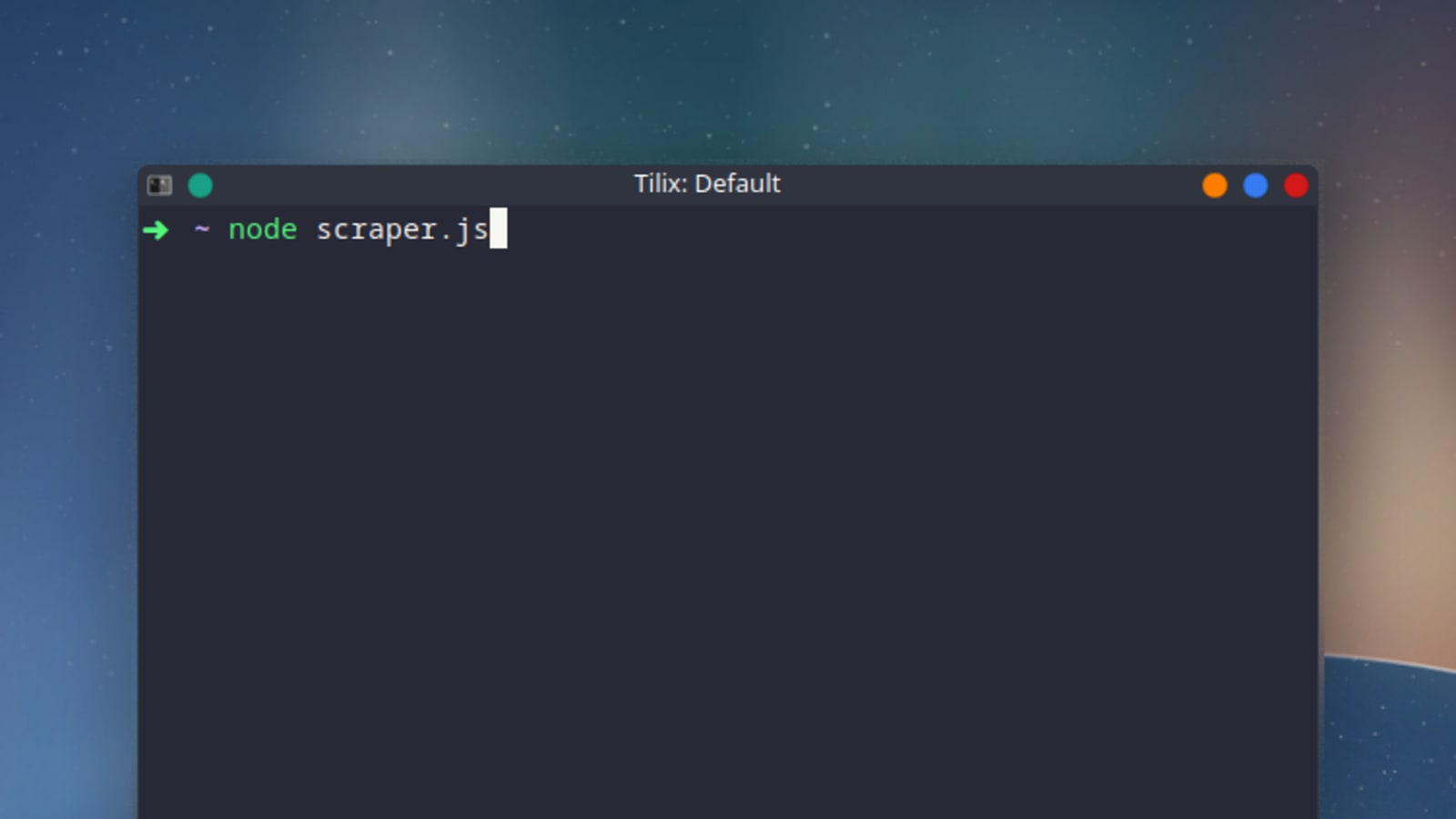

I’m using Visual Studio to run this task.

Step 1- Creating the file

To create the project file, I run npm init and fill in a few details, as shown in the screenshot below.

Create

Step 2- Install & Call the required libraries

Run the commands below to install these libraries.

Install Libraries

Once the libraries are properly installed, you will see messages like these displayed.

logs after packages get installed

Call the required libraries:

Call the library

Step 3- Select the Website & Data needed to Scrape.

I picked this website “ and want to scrape the gold rates along with their dates.

Data we want to scrape

Step 4- Set the URL & Check the Response Code

The Node JS code to pass the URL and check the response code looks like this.

Passing URL & Getting Response Code
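The article's own snippet for this step is Node JS code. As a rough illustration of the same idea (request a URL, then look at the response code), here is a minimal Python sketch using only the standard library. The function name get_status, its open_url parameter, and the URL in the usage comment are assumptions made for this example, not part of the original tutorial.

```python
from urllib.error import HTTPError
from urllib.request import urlopen


def get_status(url, open_url=urlopen):
    """Return (status_code, body_text) for a GET request to `url`.

    `open_url` defaults to urllib's urlopen; it is a parameter only so the
    function can be tried out without network access.
    """
    try:
        with open_url(url) as response:
            body = response.read().decode("utf-8", errors="replace")
            return response.status, body
    except HTTPError as err:
        # urlopen raises HTTPError for 4xx/5xx responses; report the code.
        return err.code, ""


# Hypothetical usage (needs network access; the URL is a placeholder):
# status, html = get_status("https://example.com/gold-rates")
# if status == 200:
#     print("OK, received", len(html), "characters of HTML")
```

A status of 200 means the page was served normally, so it is safe to move on to inspecting the HTML.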

Step 5- Inspect & Find the Proper HTML tags

It’s quite easy to find the HTML tags that hold your data.

To see the HTML tags, right-click the page and select the Inspect option.

Inspecting the HTML Tags

Select the proper HTML tags:

Notice that our table has three columns. The table header row is marked “HeaderRow”, and all the column names sit inside “th” (table header) tags.

For each table row (“tr”), our data resides under the “DataRow” HTML tag.

So I need to get everything that resides under “HeaderRow”, find all the “th” HTML tags, and finally iterate through the “DataRow” HTML tags to get all the data within them.

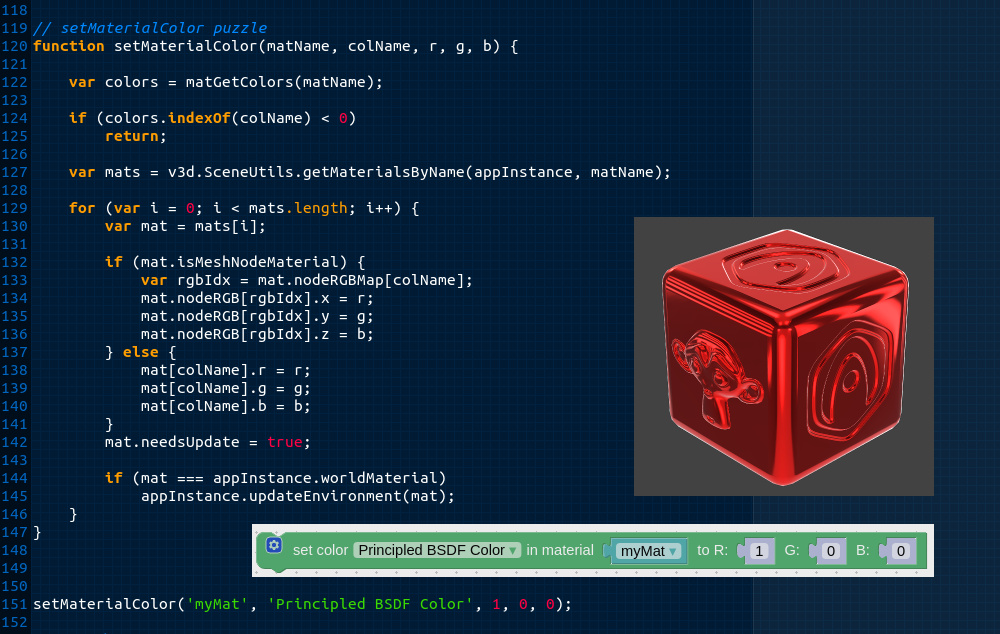
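The traversal described above (read the “th” cells under “HeaderRow”, then walk each “DataRow”) can be sketched in Python with the standard library's html.parser. This is only an illustration under stated assumptions: it treats “HeaderRow” and “DataRow” as class attributes on “tr” elements, and the sample markup, column names, and values are invented.

```python
from html.parser import HTMLParser

# Invented sample markup; the real page's structure may differ.
SAMPLE_HTML = """
<table>
  <tr class="HeaderRow"><th>Date</th><th>Gold 22K</th><th>Gold 24K</th></tr>
  <tr class="DataRow"><td>01 Jan</td><td>4500</td><td>4900</td></tr>
  <tr class="DataRow"><td>02 Jan</td><td>4510</td><td>4915</td></tr>
</table>
"""


class RateTableParser(HTMLParser):
    """Collect <th> text from HeaderRow rows and <td> text from DataRow rows."""

    def __init__(self):
        super().__init__()
        self.headers = []
        self.rows = []
        self._row_kind = None  # class of the current <tr>, or None
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row_kind = dict(attrs).get("class")
            if self._row_kind == "DataRow":
                self.rows.append([])
        elif tag in ("th", "td") and self._row_kind:
            self._in_cell = True

    def handle_endtag(self, tag):
        if tag == "tr":
            self._row_kind = None
        elif tag in ("th", "td"):
            self._in_cell = False

    def handle_data(self, data):
        if not self._in_cell:
            return
        if self._row_kind == "HeaderRow":
            self.headers.append(data.strip())
        elif self._row_kind == "DataRow":
            self.rows[-1].append(data.strip())


parser = RateTableParser()
parser.feed(SAMPLE_HTML)

# Pair each data row with the column names from the header row.
records = [dict(zip(parser.headers, row)) for row in parser.rows]
print(records)
```

Each record maps a column name to the cell value in that row, which makes the cross-check in Step 7 straightforward.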

Step 6- Include the HTML tags in our Code

After including the HTML tags, our code looks like this:

Code Snippet

Step 7- Cross-check the Scraped Data

Print the data to check it. The code for this looks like:

Our Scraped Data

If you go to a more granular level of HTML Tags & iterate them accordingly, you will get more precise data.

That’s all about web scraping & how to get rare quality data like gold.

Conclusion

I tried to explain Web Scraping using Node JS in a precise way. Hopefully, this will help you.

Find full code on

Vgyaan’s–GithubRepo

If you have any questions about the code or web scraping in general, reach out to me on

Vgyaan’s–Linkedin

We will meet again with something new.

Till then,

Happy Coding..!

Web Scraping With Python vs JavaScript – Medium

JavaScript and Python are two of the most popular programming languages today. They’re used for various tasks and functions, including web and mobile development, data science, and web scraping.

If you’re looking to get started with web scraping, you might want to know what the pros and cons of using JavaScript and Python are. In this article, we’ll go through the key reasons why these programming languages are widely used for web scraping. We’ll also take a look at some perks and limitations you’ll need to watch out for before choosing a programming language for your web scraping project.

Python is mainly used for web scraping as it’s pretty straightforward to get started with. Not only is the syntax quite simple to understand, but there are also thriving Python communities that can help beginners get proficient with this programming language. Besides, Python carries an extensive collection of libraries that aid with the extraction and manipulation of data. A few examples of Python libraries used for web scraping purposes are Beautiful Soup, Scrapy, and Selenium, which are easy to install and use. There are other Python libraries as well, such as Pandas and NumPy, that can be used to handle data retrieved from the web.

Because of the popularity of Python, there are different coding environments and IDEs (Integrated Development Environments) such as Visual Studio Code and PyCharm that support this language. These programs make it easier for beginners to get started with Python programming.

To scrape data from a web page with Python, you’ll first need to select a public URL to scrape from. Once you’ve chosen a target, you can navigate to the page and inspect it. After finding the publicly available data you want to extract, you can write the code in Python and run it.

There are different ways to extract data from a web page using Python. One method is to use the string methods available in this language, such as find(), to search through the HTML text for specific tags.
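As a small illustration of the string-method approach, the sketch below uses str.find() to pull the text between two tags. The HTML fragment is invented for the example, and this approach only suits very simple, predictable markup.

```python
# Invented HTML fragment used only for illustration.
html = "<html><head><title>Gold Rates Today</title></head><body></body></html>"

start_tag, end_tag = "<title>", "</title>"

# str.find() returns the index of the first match, or -1 if there is none.
start = html.find(start_tag)
end = html.find(end_tag)

if start != -1 and end != -1:
    # Slice out everything between the opening and closing tag.
    title = html[start + len(start_tag):end]
else:
    title = None

print(title)
```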
Alternatively, Python supports regular expressions through its ‘re’ module, whose findall() method finds all text that matches a regular expression.

Many programmers use dedicated HTML parsers such as Beautiful Soup to make parsing HTML pages easier. Another common solution is the lxml library, which is more flexible than Beautiful Soup and is often used in conjunction with the Python Requests library, a powerful tool for sending HTTP requests.

When it comes to interacting with HTML forms, different packages compatible with Python can be used. One such example is Selenium, a framework designed for web browser automation. It allows you to drive a browser and perform human-like tasks such as clicking buttons or filling out forms. Besides, Selenium gives you access to a headless browser, which is a web browser without a graphical user interface, making data scraping even more efficient.

When using Python for public web scraping, you should be aware of a couple of perks and limitations associated with this programming language. First of all, it’s suitable for both beginners and advanced programmers. Python has a simple syntax, and dynamic typing makes it easy to pick up while providing enough features for all but the most demanding tasks.

As one of the most used programming languages for web scraping, Python stands out with its huge community and a wide range of tools and libraries. Thanks to that, finding help when in need or making improvements related to web scraping might be a breeze if you use Python.

Moreover, Python supports several ways of running tasks concurrently: multithreading, multiprocessing, and asynchronous programming. Specifically, multithreading lets several threads make progress within one process, and multiprocessing spreads the work across several processes that the operating system runs simultaneously. With asynchronous programming, operations can proceed independently instead of waiting for one another.
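To make the multithreading case concrete, here is a minimal sketch using concurrent.futures.ThreadPoolExecutor from the standard library. The fetch() function is a stand-in that fakes a download, and the URLs are placeholders; both are assumptions made for this example. In a real scraper, fetch() would perform the HTTP request, which is the I/O-bound part that benefits from threads.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch(url):
    """Stand-in for a real download; returns a fake page for `url`."""
    return f"<html>{url}</html>"


# Placeholder URLs, not real targets.
urls = [f"https://example.com/page/{n}" for n in range(1, 4)]

# Up to three worker threads handle the URLs concurrently;
# executor.map() yields results in the same order as `urls`.
with ThreadPoolExecutor(max_workers=3) as executor:
    pages = list(executor.map(fetch, urls))

print(len(pages), "pages fetched")
```

The same module offers ProcessPoolExecutor for multiprocessing, and the asyncio module covers the asynchronous style.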
All this combined enhances the efficiency of web scraping.

When it comes to shortcomings, Python has limited performance compared to statically typed languages like C++. To mitigate most of the performance issues, you can integrate critical sections written in faster programming languages.

Python also requires slightly more work to scale properly due to the Global Interpreter Lock (GIL), a lock that allows only one thread to execute Python code at a time. As a result, some tasks might be executed more slowly.

Finally, the nature of dynamic typing usually leaves more room for mistakes that would otherwise be caught during compilation, the process of turning source code into a form the computer can execute. Yet, type hints and static type checkers like MyPy can help prevent such mistakes.

JavaScript is a famous programming language that almost every web developer is familiar with. Thus, the learning curve for getting started with web scraping using JavaScript is usually low for most web developers.

Since JavaScript is very popular, there are many resources on the internet that anyone can use to learn the language. What’s more, this programming language is relatively fast, versatile, and can be used for a wide range of tasks.

Similar to Python, JavaScript code can be written in any code editor, including Visual Studio Code, Atom, and Sublime Text. To use JavaScript for your public web scraping projects, you’ll have to install Node.js from the official download page. Node.js, a powerful JavaScript runtime, will provide developers with a set of tools to scrape publicly available data from websites with minimal hassle.

Node Package Manager (NPM) also features many useful libraries, such as Axios, Cheerio, JSDOM, Puppeteer, and Nightmare, that make web scraping using JavaScript a breeze. Axios is a popular promise-based HTTP client package used to send HTTP requests, while Cheerio and JSDOM are tools that make parsing the retrieved HTML page and manipulating the DOM easier.
Puppeteer and Nightmare are high-level libraries that allow you to programmatically control headless browsers to scrape both static and dynamic content from web pages. Getting started with these tools is quite easy, and you can get help from their documentation pages.

Summing up, the general process of web scraping with JavaScript is similar to web scraping with Python. First, you pick a target URL that you want to extract publicly available data from. Then, using the available tools, you fetch the web page, extract the data, process it, and then save it in a useful format.

First and foremost, JavaScript excels at speed, as Node.js is based on the powerful Chrome V8 engine. Its event-based model and non-blocking Input/Output (I/O) optimize memory usage; thus, Node.js can efficiently handle many concurrent web page requests at a time.

Besides, libraries written to run natively on Node.js can be quite fast and help you improve the overall development workflow. For example, Gulp can assist in task automation, while Cheerio aids in parsing and traversing HTML. Other examples of such libraries include Async and Express.

However, standard libraries often leave users wanting additional tools to make working with JavaScript quicker and easier. Since JavaScript has a vast community, there are a lot of community-driven packages available for Node.js.

As for the limitations of JavaScript, one flaw of using JavaScript for web scraping is that Node.js doesn’t perform very well when handling sizeable CPU-bound computing tasks due to its single-threaded and event-driven nature. However, the “worker threads” module, introduced in 2018, makes it possible to execute multiple threads simultaneously.

JavaScript uses callbacks extensively as a result of its asynchronous approach. Unfortunately, this often results in a situation known as callback hell, where callback nesting goes several layers deep, making the code quite challenging to understand and maintain.
Nevertheless, you can avoid this issue by using proper coding standards or the async/await syntax, which handles asynchronicity without relying on nested callbacks.

Just like Python, JavaScript is a dynamically typed language. Hence, it’s also essential to watch out for bugs that may occur at runtime. As a way out, programmers who have experience with a statically typed language can choose to work with TypeScript, a superset of JavaScript that supports type checking. TypeScript is compiled to JavaScript and makes it easier to spot and handle type errors before runtime.

Python is more widely used for web scraping purposes due to the popularity and ease of using the Beautiful Soup library, which makes it simple to navigate and search through parse trees. Yet, JavaScript might be a better option for programmers who already have experience with this programming language. Whether you’re working with Python or JavaScript, the process of scraping data from a web page remains the same. That is, you send a request to the publicly available page you want to scrape, parse the response, and save the data in a useful format.

Here’s a quick table showing how Python compares to JavaScript for web scraping.

As we have seen, both Python and JavaScript are excellent options for public web scraping. They are pretty easy to learn and work with and have many useful libraries that make it simple to scrape publicly available data from websites.

We hope this article has helped you to see how Python and JavaScript compare for web scraping. If you want to learn more about web scraping with Python and JavaScript, check out these detailed articles on Python Web Scraping and JavaScript Web Scraping. You can also learn how to get started with Puppeteer from this article.
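The request, parse, and save process described in this article can be sketched end to end in a few lines of Python. To keep the example runnable offline, the request step is replaced by an invented inline response. The markup, class names, and prices are all assumptions made for illustration, and the regular expression only works because the fragment is tiny and regular.

```python
import csv
import io
import re

# Step 1 (request) is replaced here by an invented inline response body.
response_text = """
<ul>
  <li class="product"><span class="name">Ring</span><span class="price">120.50</span></li>
  <li class="product"><span class="name">Chain</span><span class="price">340.00</span></li>
</ul>
"""

# Step 2: parse the response. re.findall() returns one (name, price) tuple
# per match; real pages are usually better served by an HTML parser.
pattern = re.compile(
    r'<span class="name">(.*?)</span><span class="price">(.*?)</span>'
)
items = [(name, float(price)) for name, price in pattern.findall(response_text)]

# Step 3: save the data in a useful format, here CSV written to memory.
buffer = io.StringIO()
writer = csv.writer(buffer, lineterminator="\n")
writer.writerow(["name", "price"])
writer.writerows(items)

print(buffer.getvalue())
```

Swapping the in-memory buffer for an open file would write the same rows to disk.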

Web-scraping JavaScript page with Python – Stack Overflow

I personally prefer using scrapy and selenium and dockerizing both in separate containers. This way you can install both with minimal hassle and crawl modern websites that almost all contain javascript in one form or another. Here’s an example:

Use scrapy startproject to create your scraper and write your spider. The skeleton can be as simple as this:

    import scrapy


    class MySpider(scrapy.Spider):
        name = 'my_spider'
        start_urls = ['']  # put the URL to crawl here

        def start_requests(self):
            yield scrapy.Request(self.start_urls[0])

        def parse(self, response):
            # do stuff with results, scrape items etc.
            # for now we're just checking everything worked
            print(response.body)

The real magic happens in middlewares.py. Overwrite two methods in the downloader middleware, __init__ and process_request, in the following way:

    # import some additional modules that we need
    import os
    from copy import deepcopy
    from time import sleep

    from scrapy import signals
    from scrapy.http import HtmlResponse
    from selenium import webdriver


    class SampleProjectDownloaderMiddleware(object):

        def __init__(self):
            # host name of the selenium container, passed in via the environment
            SELENIUM_LOCATION = os.environ.get('SELENIUM_LOCATION', 'NOT_HERE')
            SELENIUM_URL = f'http://{SELENIUM_LOCATION}:4444/wd/hub'
            chrome_options = webdriver.ChromeOptions()
            # chrome_options.add_experimental_option("mobileEmulation", mobile_emulation)
            # older Selenium releases took
            # desired_capabilities=chrome_options.to_capabilities() here instead
            self.driver = webdriver.Remote(command_executor=SELENIUM_URL,
                                           options=chrome_options)

        def process_request(self, request, spider):
            self.driver.get(request.url)

            # sleep a bit so the page has time to load
            # or monitor items on page to continue as soon as page ready
            sleep(4)

            # if you need to manipulate the page content like clicking and
            # scrolling, you do it here, e.g. locate an element through
            # self.driver and call .click() on it

            # you only need the now properly and completely rendered html
            # from your page to get results
            body = deepcopy(self.driver.page_source)

            # copy the current url in case of redirects
            url = deepcopy(self.driver.current_url)

            return HtmlResponse(url, body=body, encoding='utf-8', request=request)
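The comments in process_request mention monitoring the page instead of sleeping for a fixed time. One way to do that is a small polling helper like the sketch below. The name wait_until and its parameters are made up for this example and are not part of Scrapy or Selenium. Selenium also ships its own WebDriverWait class for the same purpose.

```python
import time


def wait_until(condition, timeout=10.0, interval=0.25):
    """Call `condition()` repeatedly until it returns a truthy value.

    Returns that value, or raises TimeoutError after `timeout` seconds.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(interval)


# Hypothetical use inside process_request, replacing sleep(4):
# wait_until(lambda: self.driver.execute_script(
#     "return document.readyState") == "complete")
```

Polling returns as soon as the page reports it is ready, so fast pages are not held up by a fixed delay and slow pages get more time than four seconds.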

Don’t forget to enable this middleware by uncommenting the next lines in the settings.py file:

    DOWNLOADER_MIDDLEWARES = {
        'sample_project.middlewares.SampleProjectDownloaderMiddleware': 543,
    }

Next for dockerization. Create your Dockerfile from a lightweight image (I’m using python Alpine here), copy your project directory to it, install requirements:

    # Use an official Python runtime as a parent image
    FROM python:3.6-alpine

    # install some packages necessary to scrapy and then curl because it's handy for debugging
    RUN apk --update add linux-headers libffi-dev openssl-dev build-base libxslt-dev libxml2-dev curl python-dev

    WORKDIR /my_scraper

    ADD requirements.txt /my_scraper/

    RUN pip install -r requirements.txt

    ADD . /scrapers

And finally bring it all together in a docker-compose file:

    version: '2'
    services:
      selenium:
        image: selenium/standalone-chrome
        ports:
          - "4444:4444"
        shm_size: 1G

      my_scraper:
        build: .
        depends_on:
          - "selenium"
        environment:
          - SELENIUM_LOCATION=samplecrawler_selenium_1
        volumes:
          - .:/my_scraper
        # use this command to keep the container running
        command: tail -f /dev/null

Run docker-compose up -d. If you’re doing this for the first time, it will take a while to fetch the latest selenium/standalone-chrome image and then build your scraper image as well.

Once it’s done, you can check that your containers are running with docker ps and also check that the name of the selenium container matches that of the environment variable that we passed to our scraper container (here, it was SELENIUM_LOCATION=samplecrawler_selenium_1).

Enter your scraper container with docker exec -ti YOUR_CONTAINER_NAME sh, the command for me was docker exec -ti samplecrawler_my_scraper_1 sh, cd into the right directory and run your scraper with scrapy crawl my_spider.

The entire thing is on my github page and you can get it from here

Frequently Asked Questions about scraping using javascript

Can you do web scraping with JavaScript?

Thanks to Node.js, JavaScript is a great language to use for a web scraper: not only is Node fast, but you’ll likely end up using a lot of the same methods you’re used to from querying the DOM with front-end JavaScript.

Is Python or JavaScript better for web scraping?

Python is more widely used for web scraping purposes due to the popularity and ease of using the Beautiful Soup library, which makes it simple to navigate and search through parse trees. Yet, JavaScript might be a better option for programmers who already have experience with this programming language. (Jun 22, 2021)

How do you scrape data from JavaScript in Python?

The requests-html library intends to make parsing HTML (e.g. scraping the web) as simple and intuitive as possible.

Install requests-html: pipenv install requests-html

Make a request to the page’s URL:

    from requests_html import HTMLSession
    session = HTMLSession()
    r = session.get(a_page_url)